Speech and voice are clearly the next big battleground for generative AI, and a number of companies are hard at work developing models that can understand and replicate natural voice patterns. And while ChatGPT Voice and the like could change storytelling forever, Microsoft claims to have reached the pinnacle of voice generation: human parity.

In fact, the company’s researchers say their text-to-speech (TTS) generator, VALL-E 2, is so advanced that it would be irresponsible and dangerous to make it public. According to a research paper (discovered by our sister magazine LiveScience), the generator needs just a few seconds of audio to reproduce a voice that is indistinguishable from a human one.

Specifically, Microsoft's researchers report that speech generated by VALL-E 2 matches or exceeds the quality of human speech when compared against audio samples from the LibriSpeech and VCTK speech datasets.

“VALL-E 2 represents the latest development in speech models using neural codecs and marks a milestone in zero-shot text-to-speech (TTS) synthesis, achieving human parity for the first time,” the researchers wrote. “In addition, VALL-E 2 consistently synthesizes high-quality speech, even for sentences that have traditionally been challenging due to their complexity or repetitive phrases.”


Although the researchers are not making the model public (more on that later), they have published several audio examples in a blog post about the project. Each one pairs a speaker prompt from LibriSpeech with a completely new, complex sentence generated in that voice by both VALL-E and VALL-E 2.

Even though the first-generation model sounds stilted, there is no denying that VALL-E 2 replicates the speaker's resonance and articulation exceptionally well.

How does it work?

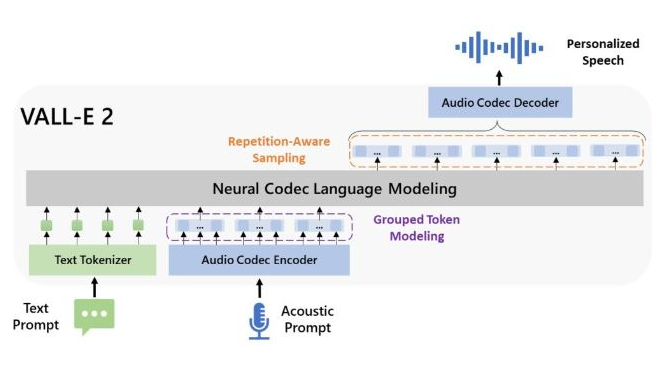

Microsoft's VALL-E 2 TTS generator relies on two key enhancements to achieve this result: "Repetition Aware Sampling" and "Grouped Code Modeling".

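The first is designed to make the output smoother and more stable. The model generates speech one token (a small unit of encoded audio) at a time, and tokens that repeat can trip it up, for example in a sentence with a lot of alliteration. In the worst case, the model gets stuck in a loop. Repetition Aware Sampling checks whether the token it is about to pick has been repeating in the recent output and, if so, changes how it chooses the next token.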
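To make the idea more concrete, here is a minimal Python sketch of repetition-aware sampling in general terms. It is not Microsoft's code: the function names, the window size, the repetition threshold and the top-p value are our own illustrative assumptions. The sampler first draws a token with standard nucleus (top-p) sampling; if that token already makes up too large a share of the most recent tokens, it redraws from the model's full probability distribution instead, giving the model a chance to break out of a repetitive loop.

```python
import random
from typing import List, Optional, Sequence


def nucleus_sample(probs: Sequence[float], top_p: float, rng: random.Random) -> int:
    """Standard nucleus (top-p) sampling over a list of token probabilities."""
    # Rank token ids from most to least likely.
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept: List[int] = []
    mass = 0.0
    # Keep the smallest set of top tokens whose combined probability reaches top_p.
    for token in order:
        kept.append(token)
        mass += probs[token]
        if mass >= top_p:
            break
    # Draw one token from that set, weighted by its original probabilities.
    return rng.choices(kept, weights=[probs[t] for t in kept], k=1)[0]


def repetition_aware_sample(
    probs: Sequence[float],
    history: Sequence[int],
    top_p: float = 0.9,      # hypothetical default
    window: int = 10,        # hypothetical: how many recent tokens to inspect
    threshold: float = 0.5,  # hypothetical: highest tolerated repetition ratio
    rng: Optional[random.Random] = None,
) -> int:
    """Illustrative sketch of repetition-aware sampling (not Microsoft's code)."""
    rng = rng or random.Random()
    token = nucleus_sample(probs, top_p, rng)
    recent = list(history[-window:])
    if recent:
        # Share of the recent window already occupied by the candidate token.
        ratio = recent.count(token) / len(recent)
        if ratio > threshold:
            # Too repetitive: redraw from the full distribution instead.
            token = rng.choices(range(len(probs)), weights=probs, k=1)[0]
    return token
```

With probabilities of `[0.95, 0.05]` and a top-p of 0.9, plain nucleus sampling would pick token 0 every time. Once the recent history is full of token 0, the fallback occasionally lets token 1 through, which is exactly the kind of escape hatch a repetitive passage needs.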

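The second feature improves efficiency by reducing the number of individual tokens the model has to process in a single input sequence. Grouped Code Modeling bundles neighboring codec tokens into groups, which shortens the sequence, speeds up generation and makes long passages easier for the model to handle.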
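The basic idea behind grouping can also be sketched in a few lines of Python. Again, this is an illustration under our own assumptions rather than the paper's implementation: the group size, the padding value and the function names are hypothetical. Packing a sequence of codec tokens into groups of size G cuts the number of steps the model has to take by roughly a factor of G.

```python
from typing import List, Sequence, Tuple


def group_codes(codes: Sequence[int], group_size: int, pad: int = 0) -> List[Tuple[int, ...]]:
    """Bundle consecutive codec tokens into fixed-size groups (illustrative only)."""
    if group_size < 1:
        raise ValueError("group_size must be at least 1")
    padded = list(codes)
    remainder = len(padded) % group_size
    if remainder:
        # Hypothetical padding value so the last group is complete.
        padded.extend([pad] * (group_size - remainder))
    return [tuple(padded[i:i + group_size]) for i in range(0, len(padded), group_size)]


def ungroup_codes(groups: Sequence[Tuple[int, ...]], original_length: int) -> List[int]:
    """Flatten groups back into a token sequence and drop any padding."""
    flat = [code for group in groups for code in group]
    return flat[:original_length]


codes = [17, 17, 4, 98, 23, 5, 61]
groups = group_codes(codes, group_size=2)
print(len(codes), "tokens ->", len(groups), "steps")  # prints: 7 tokens -> 4 steps
```

With a group size of 2, the seven example tokens become four steps, with the last group padded. Grouping changes how many steps the model takes, not how much audio it ultimately produces.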

“VALL-E 2 outperforms previous zero-shot TTS systems in terms of speech robustness, naturalness, and speaker likeness,” the researchers wrote in the blog post. “VALL-E 2 can produce precise, natural speech in the exact voice of the original speaker, comparable to human performance.”

Too dangerous?

Although Microsoft claims there are potential uses for an AI speech generator with this output quality, such as speech generation for people with aphasia or amyotrophic lateral sclerosis, the company is currently limiting itself to research.

"Currently, we have no plans to incorporate VALL-E 2 into a product or expand access to the public," the scientists wrote. This is partly due to the potential for misuse if the model were made publicly available. In an ethics statement at the end of the paper, the researchers wrote that their creation "could pose potential risks if the model were misused, such as falsifying voice recognition or imitating a specific speaker."

This caution is not unique to Microsoft. OpenAI, the developer of ChatGPT, has also introduced restrictions on some of its voice technologies and developed a deepfake detector designed to help users identify when images were created using AI. Whether VALL-E 2 (or a successor) will stay under wraps is yet to be seen. The AI race is likely to intensify in the coming months and years, and companies and academics will no doubt feel the pressure to push the boundaries.